TL;DR:

Three common deployment failures can be detected quickly, and often prevented, by automating end-to-end acceptance testing as part of the continuous integration process.

1. Database schema/model changes

Web applications and APIs that rely on a database inevitably change their schema or data layout. Changes to models and schema migrations can be extremely tricky and often have consequences which are difficult to predict, causing bugs, failures, and web service disruptions.

In an ideal world, failures related to schema migrations would be preventable. Sadly, bugs will still make their way into production in unpredictable ways. It's important to identify failure scenarios as fast as possible in production & non-production environments.

The ideal time to automatically run end-to-end tests against a web app is immediately after a deployment. This is especially true for teams who practice some form of continuous deployment; a wide surface-area of the application can be tested almost instantly after every deploy.

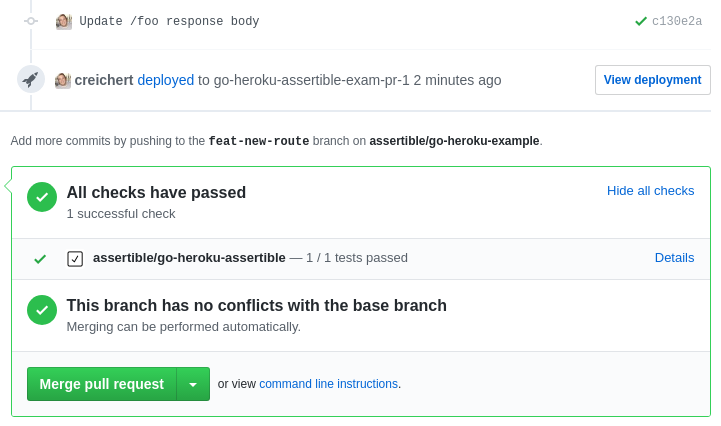

At Assertible, we test every single push to any branch of our code using the GitHub deployment integration. Using this integration, we can see the status of all our tests in each GitHub PR.

Every push to a branch deploys our web app and API to a staging environment. When a branch is merged into master, the code is built and deployed to our production environment, where we run a wide range of tests against our web app and API.

If a deployment contains code that requires model changes, we are able to execute our migrations against a staging database which mimics as much of our production systems as possible. When failures occur, we know instantly and can react accordingly.

By using this strategy, nearly all unexpected schema migration disruptions occur in a staging environment, which allows us to adjust our patches accordingly.

In cases where performance, downtime, or data loss are big factors in maintaining backwards compatibility, we use branches dedicated to **only** the schema migration. We write our schema migrations in a backwards compatible way so follow-up branches can utilize the new models without disrupting the old models.
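As a rough illustration of what "backwards compatible" can mean here, the sketch below applies an additive migration (a new nullable column) and shows that code written before the migration keeps working afterwards. It uses an in-memory SQLite database; the `users` table, the `display_name` column, and the function names are all hypothetical and not taken from Assertible's code base.

```python
import sqlite3


def migrate_add_display_name(conn):
    """Additive migration: add a nullable column so old writers keep working."""
    # PRAGMA table_info returns (cid, name, type, notnull, dflt_value, pk).
    columns = [row[1] for row in conn.execute("PRAGMA table_info(users)")]
    if "display_name" not in columns:  # idempotent: safe to run twice
        conn.execute("ALTER TABLE users ADD COLUMN display_name TEXT")
    conn.commit()


def old_code_insert(conn, email):
    # Code deployed before the migration: unaware of display_name.
    conn.execute("INSERT INTO users (email) VALUES (?)", (email,))


def new_code_insert(conn, email, display_name):
    # Code from a follow-up branch that uses the new column.
    conn.execute(
        "INSERT INTO users (email, display_name) VALUES (?, ?)",
        (email, display_name),
    )


def demo():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
    )
    old_code_insert(conn, "a@example.com")   # before the migration
    migrate_add_display_name(conn)
    old_code_insert(conn, "b@example.com")   # old code, new schema
    new_code_insert(conn, "c@example.com", "Cee")
    return conn.execute(
        "SELECT email, display_name FROM users ORDER BY id"
    ).fetchall()
```

Because the new column is nullable and nothing is renamed or dropped, the schema-only branch can ship first and the code that depends on it can follow later.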

Teams that run migrations manually using SQL scripts can invoke a trigger URL for their web service to run all tests against the entire service immediately. Trigger URLs can be used at any point in the database maintenance process.
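A manual migration script could call the trigger URL as its last step. The following is a minimal sketch using only the Python standard library. The URL shown in the usage note is a placeholder, and the choice of `POST` is an assumption; check your service's trigger URL documentation for the exact URL format and HTTP method.

```python
import urllib.request


def build_trigger_request(trigger_url, method="POST"):
    """Build the HTTP request for a trigger URL without sending it."""
    # Trigger URLs usually embed a secret token, so refuse plain HTTP.
    if not trigger_url.startswith("https://"):
        raise ValueError("trigger URL should use https")
    return urllib.request.Request(trigger_url, method=method)


def fire_trigger(trigger_url, timeout=30.0):
    """Send the request and return the HTTP status code."""
    request = build_trigger_request(trigger_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

After the SQL script finishes, the maintenance process would call something like `fire_trigger("https://example.com/your-trigger-url")` and treat any non-2xx status or raised exception as a signal to investigate.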

In all cases, you can receive failure notifications through Slack and email, or check the Assertible dashboard.

Deployment tips

- Make the smallest model changes possible

- Use version control to record changes to the database

- Have a rollback process in place and test it frequently
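One way to test a rollback process frequently is a round-trip check: apply the migration, roll it back, and confirm the schema matches what you started with. The sketch below does this against an in-memory SQLite database. The `upgrade` and `downgrade` functions and the table names are hypothetical; a real project would plug in its own migration tool.

```python
import sqlite3


def schema_snapshot(conn):
    """Return the table and index definitions, sorted by name."""
    return conn.execute(
        "SELECT name, sql FROM sqlite_master "
        "WHERE type IN ('table', 'index') ORDER BY name"
    ).fetchall()


def upgrade(conn):
    # Hypothetical migration: introduce a new table.
    conn.execute(
        "CREATE TABLE audit_log (id INTEGER PRIMARY KEY, message TEXT)"
    )


def downgrade(conn):
    # Rollback for the migration above.
    conn.execute("DROP TABLE audit_log")


def rollback_round_trip():
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)"
    )
    before = schema_snapshot(conn)
    upgrade(conn)
    during = schema_snapshot(conn)
    downgrade(conn)
    after = schema_snapshot(conn)
    return before, during, after
```

A check like this only covers schema shape. Rollbacks that must also restore data need their own tests against realistic fixtures.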

2. Service integration failures

Many web apps and APIs rely on external services to varying degrees. These services may take the form of external APIs like GitHub or Stripe, micro-services, or webhooks. Each of these dependencies represents a point of failure in a modern web application architecture.

At Assertible, we solve integration failures using:

Unit and acceptance tests

We have isolated unit and acceptance tests which run during our continuous integration build phase. These tests make extensive use of mocks to stand in for external HTTP services and other outgoing requests.

We also have various acceptance tests which submit mocked versions of webhooks to handlers, ensuring they respond properly. This is all done during the build & test portion of our continuous integration pipeline.
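To make the two ideas above concrete, here is a small sketch in Python using `unittest.mock` from the standard library. It is not Assertible's test suite: the `fetch_repo_name` and `handle_webhook` functions, the payload shape, and the URLs are hypothetical and exist only to show the pattern of replacing an outgoing HTTP call with a mock and feeding a mocked webhook payload to a handler.

```python
import json
from unittest import mock


def fetch_repo_name(http_get, repo_url):
    """Return the 'name' field of a JSON API response.

    http_get is injected so tests can swap in a mock for the real client.
    """
    body = http_get(repo_url)
    return json.loads(body)["name"]


def handle_webhook(payload):
    """Hypothetical webhook handler returning (status_code, message)."""
    if payload.get("event") != "push" or "ref" not in payload:
        return 400, "unsupported payload"
    return 200, "queued build for " + payload["ref"]


def run_examples():
    # Mocked external service: no network traffic during CI.
    fake_get = mock.Mock(return_value='{"name": "demo-repo"}')
    name = fetch_repo_name(fake_get, "https://api.example.com/repos/demo")
    fake_get.assert_called_once_with("https://api.example.com/repos/demo")

    # Mocked webhook payloads: one valid, one the handler should reject.
    accepted = handle_webhook({"event": "push", "ref": "refs/heads/main"})
    rejected = handle_webhook({"event": "unknown"})
    return name, accepted, rejected
```

Injecting the HTTP function keeps the code under test free of patching tricks, and asserting on the mock's call arguments catches cases where the code silently starts requesting the wrong URL.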

Post-deployment / end-to-end tests

Part of our post-deployment testing process includes tests specifically designed for API endpoints and features which rely on external service integrations and webhooks. We use Slack alerts to immediately notify our team when anything goes wrong.

Event logging

As a final protection against unknown failures, we try to log all corner cases, exceptions, and unexpected error events (using Rollbar). This lets us know immediately when an unexpected error is hit, and the corresponding metadata allows us to reproduce the failure as a test in Assertible.
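The general pattern of attaching metadata to an unexpected error can be sketched with Python's standard `logging` module, used here as a stand-in for an error tracker such as Rollbar (this is not Rollbar's API). The `call_with_logging` helper, the `ListHandler` class, and the `context` field name are assumptions made for illustration.

```python
import logging


class ListHandler(logging.Handler):
    """Collects log records in memory; stands in for a remote error tracker."""

    def __init__(self):
        super().__init__()
        self.records = []

    def emit(self, record):
        self.records.append(record)


def call_with_logging(logger, func, **context):
    """Run func(); on any exception, log it with the given context metadata."""
    try:
        return func()
    except Exception:
        # exc_info=True attaches the active exception and traceback;
        # 'extra' stores the metadata on the record as record.context.
        logger.error(
            "unexpected error", exc_info=True, extra={"context": context}
        )
        return None
```

Recording request details, identifiers, and inputs alongside the exception is what makes it practical to turn a production error into a repeatable test later.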

3. Insufficient manual testing

Manual testing is generally done incrementally throughout the lifecycle of an app. On many teams, developers manually test each new feature as it's deployed. Larger enterprises may have a QA team with a more specialized set of tools but the process is still the same. As new features are built, only the newest and most critical features are tested, leaving holes in test coverage.

Problems with manual testing

- Time consuming

- Error prone

- Difficult to measure

The process of manually testing a web app each time it's deployed works well for single features which are new, but doesn't scale well for non-trivial apps that change frequently.

For each feature we build and each bug we fix, we write a new test using Assertible. We execute these tests every single time we deploy our web app and API to a staging or production environment. These tests then continue running on a schedule.

This has significantly reduced the burden of testing an application with a larger surface area and growing number of features and dependencies.

Automated testing best practices

Here's a more specific breakdown of how we use Assertible to test and identify various deployment failure scenarios:

Run tests after each and every deployment

Specifically, we use the Assertible GitHub deployment integration. As mentioned above, we deploy every branch to a staging environment, with each branch tracked as a GitHub pull request. Doing this maintains a robust continuous deployment pipeline where all pushes have several layers of testing, from unit tests to end-to-end tests.

Run tests on a schedule

Along with running tests on every deployment, we also run tests frequently on schedules. In the future we plan to expand the capabilities to run different types of tests at different frequencies. For example, tests that create and delete data should be run less often on production systems.

Write tests for new features

We create a new Assertible test for all new changes to our web app or API. A variety of workflows are tested and we're working towards having 100% API coverage.

We do NOT consider end-to-end tests a replacement for testing before deployment. We believe testing immediately after building and before deploying is crucial to keeping software high-quality and should be the first line of defense against bugs and regressions, followed by other kinds of integration tests and Assertible tests.

Write a test for each new regression or bug

We create new Assertible tests when we find bugs and regressions in our own code base. This helps us make sure they don't pop up again. We keep links to the relevant issue tracker entries and pull requests in each test's description, so we can conveniently look up extended information about a specific test.

:: Christopher Reichert
