An increasing number of businesses rely on APIs and web services as a core part of their business or to accomplish strategic goals. Because of this trend in API and web service usage, it's imperative for API and web service providers to maintain extremely high levels of availability and quality assurance.

There are many high-quality tools that can help teams maintain and test their APIs, including Assertible and Runscope. In this post I will outline why Assertible is a good fit for teams that test APIs and illustrate precisely how Assertible improves on features that both Assertible and Runscope offer.

- Web service based testing

- Reproducible and deterministic tests

- Truly codeless testing

- First-class continuous integration and delivery automation

- Simplified request and response debugging

Web service based testing

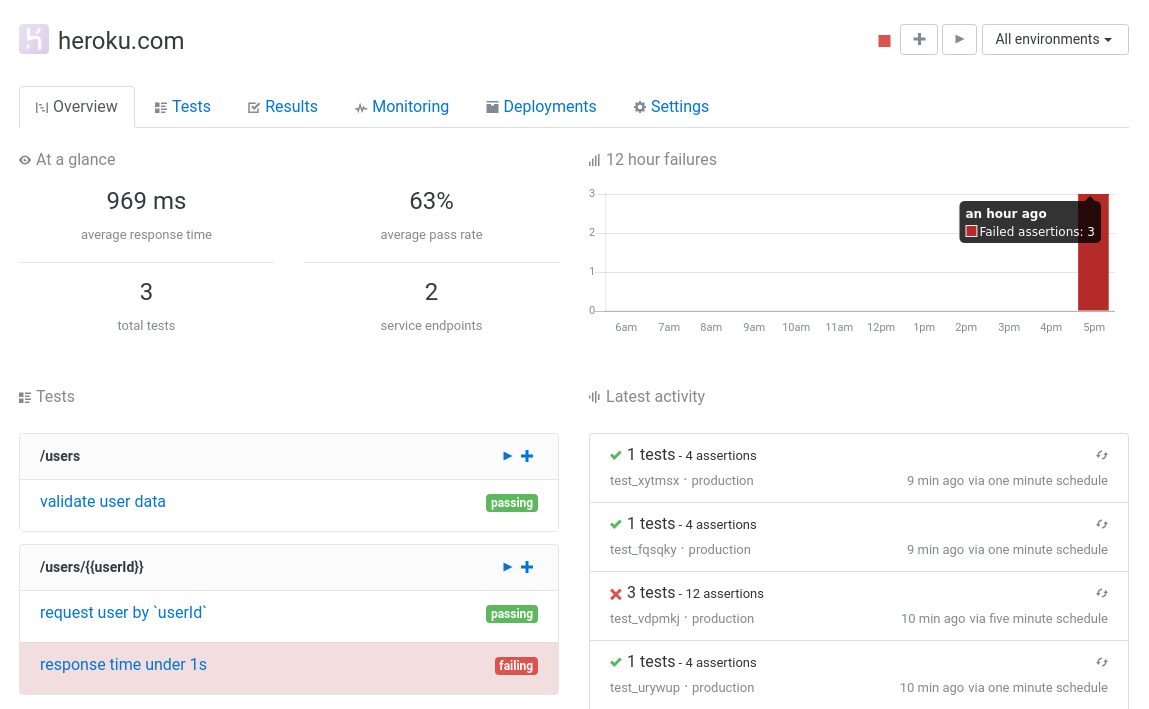

Web services are first-class citizens in Assertible, and many other concepts, such as tests and schedules, are intuitively built around them. Assertible is very opinionated about what is being tested, and most of the Assertible dashboard revolves around the concept of a web service context.

Runscope, on the other hand, is less opinionated on which web service is being tested. For example, their overview page can arbitrarily support any number of tests from various web services, making it difficult to find what you need.

In practice, this means that all tests defined in Assertible under a web service are specifically designed to test only that web service, even though you can make HTTP requests to any URL to set up data, fetch auth tokens, etc. In Runscope, tests can span an arbitrary number of web services.

Because Assertible chooses to be opinionated in this regard, it has several distinct advantages over Runscope:

- Logical separation of concerns. Each web service has its own tests, integrations, schedules, deployments, and settings.

- Intuitive mental model of what exactly is being tested.

- Easier to view metrics and failures for a single web service.

- Error notifications and alerts accurately identify a specific failing or degraded web service.

- Modeling environments, or specific instances of the web service, is trivial to organize.

- Importing Swagger definitions or Postman collections to one web service models most real-world use cases (Swagger definitions don't span multiple web services).
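To make the service-scoped model above more concrete, here is a rough sketch of it as a data structure. This is an illustration only, not Assertible's actual schema: the class and field names (`WebService`, `Environment`, `ApiTest`) and the example URLs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    name: str       # e.g. "production" or "staging"
    base_url: str   # where this instance of the service lives


@dataclass
class ApiTest:
    name: str
    method: str
    path: str       # always relative to the owning service's base URL


@dataclass
class WebService:
    name: str
    environments: dict[str, Environment] = field(default_factory=dict)
    tests: list[ApiTest] = field(default_factory=list)

    def add_environment(self, env: Environment) -> None:
        self.environments[env.name] = env

    def urls_for(self, env_name: str) -> list[str]:
        """Resolve every test's primary request URL against one environment."""
        base = self.environments[env_name].base_url.rstrip("/")
        return [f"{base}{t.path}" for t in self.tests]


# Hypothetical service with two environments and two tests.
service = WebService(name="orders-api")
service.add_environment(Environment("production", "https://api.example.com"))
service.add_environment(Environment("staging", "https://staging.example.com/"))
service.tests.append(ApiTest("list orders", "GET", "/orders"))
service.tests.append(ApiTest("get order", "GET", "/orders/1"))

print(service.urls_for("staging"))
```

Because every test hangs off exactly one service, switching environments only changes the base URL, and a failure always points back to a single service.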

Reproducible and deterministic tests

In Assertible, tests are designed to be simple, reproducible, and easy to reason about. Effectively, a test is designed to test a single HTTP request. Although other requests can be used to set up test data and dynamic variables, assertions are designed to test the primary HTTP request for the test against a specific web service.

We've explicitly chosen this design because it has several advantages:

- Tests are reliable, deterministic, and hermetic

- Tests are fast and capable of being run concurrently

- Failures are isolated and easy to reason about

- Side effects from previous steps are minimized because each test is capable of setting up its own dependencies

- Error messages are extremely specific and provide sufficient context to start acting on the failure without loading the dashboard.

- Intuitive mental model of test execution
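The properties listed above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Assertible runs tests internally: `IsolatedTest`, `Response`, and `fake_send` are hypothetical names, and `fake_send` stands in for a real HTTP client so the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Response:
    status: int
    body: dict


def fake_send(method: str, path: str, variables: dict) -> Response:
    """Stand-in for an HTTP client so the sketch runs offline."""
    if path == "/orders" and variables.get("token") == "abc":
        return Response(200, {"orders": []})
    return Response(401, {"error": "unauthorized"})


@dataclass
class IsolatedTest:
    name: str
    setup: Callable[[], dict]                      # builds this test's own variables
    method: str
    path: str
    assertions: list[Callable[[Response], bool]]   # checked against the primary request only

    def run(self) -> tuple[str, bool]:
        variables = self.setup()                   # nothing is shared with other tests
        response = fake_send(self.method, self.path, variables)
        return self.name, all(check(response) for check in self.assertions)


tests = [
    IsolatedTest("authorized list", lambda: {"token": "abc"}, "GET", "/orders",
                 [lambda r: r.status == 200, lambda r: "orders" in r.body]),
    IsolatedTest("missing token", lambda: {}, "GET", "/orders",
                 [lambda r: r.status == 401]),
]

# No test depends on another, so ordering and parallelism do not change the results.
with ThreadPoolExecutor(max_workers=2) as pool:
    results = dict(pool.map(lambda t: t.run(), tests))

print(results)
```

Each test carries its own setup and asserts on one primary request, which is what makes it safe to run the tests concurrently.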

Runscope tests can span an arbitrary number of steps which can become complicated and difficult to reason about. The Runscope approach prioritizes flexibility and can model a complicated series of requests or end-to-end workflow.

In practice, complicated test sequences are sometimes necessary but often flaky. There's some evidence that suggests complicated tests are not a good fit for the majority of testing workflows.

For example, the Google Testing Blog's post, Just Say No to More End-to-End Tests, illustrates why isolated reproducible tests are almost always preferred to more complicated testing sequences.

Don't get me wrong. Complex test sequences are the only way to model some end-to-end test cases, and a tool like Runscope can make this possible. However, for a large portion of testing needs, isolated, reproducible, and simple tests are reliable and have significantly fewer false positives and flaky results.

While we plan on expanding our setup and teardown concepts to support an arbitrary number of steps, we've found that limiting our tests to the fewest steps possible is extremely empowering: a simpler mental model of the test sequence gives us more specific error messages and a quicker understanding of new problems.

Truly codeless testing

Assertible aims to be truly codeless by default. While we do have features that support running tests programmatically from command-line scripts and build pipelines, the core Assertible product is designed to be configured without writing any code.

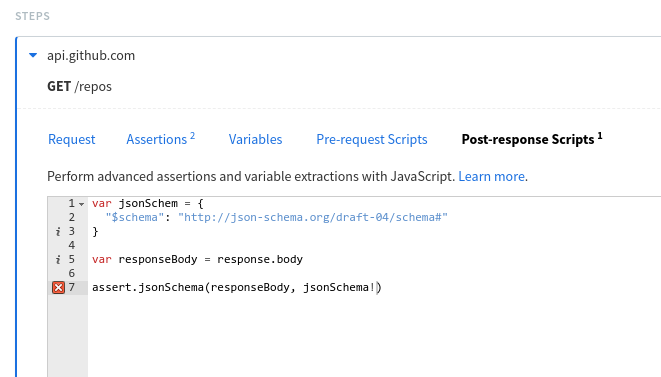

Many features in Runscope, on the other hand, rely on custom JavaScript code snippets to achieve testing goals. For example, in Runscope, validating a JSON Schema must be accomplished manually using JavaScript code.

In Assertible, JSON Schema validation is a turn-key operation fully supported in the user interface.

In the Runscope example, it's necessary to assign the schema to a var, extract the response body manually, and run the assertion using JavaScript code. In the Assertible example, the JSON Schema just needs to be copied into the Schema definition input.
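To give a feel for the three scripted steps described above, here is a rough sketch. It is written in Python rather than the JavaScript that Runscope scripts use, and it is not Runscope's API. The helper `validate_subset` is hypothetical and only checks a tiny subset of JSON Schema (`type`, `required`, `properties`); a real script would call a full validator library.

```python
import json

# Step 1: assign the schema to a variable.
schema = {
    "type": "object",
    "required": ["id", "name"],
    "properties": {
        "id": {"type": "integer"},
        "name": {"type": "string"},
    },
}

# Maps the JSON Schema type names used above to Python types.
TYPES = {"object": dict, "string": str, "integer": int}


def validate_subset(instance, schema) -> list[str]:
    """Return a list of error messages; an empty list means the check passed."""
    expected = TYPES[schema["type"]]
    # bool is a subclass of int in Python, so reject it explicitly for integers.
    is_bool_as_int = expected is int and isinstance(instance, bool)
    if not isinstance(instance, expected) or is_bool_as_int:
        return [f"expected {schema['type']}, got {type(instance).__name__}"]
    errors = []
    if expected is dict:
        for key in schema.get("required", []):
            if key not in instance:
                errors.append(f"missing required property: {key}")
        for key, subschema in schema.get("properties", {}).items():
            if key in instance:
                errors.extend(f"{key}: {e}" for e in validate_subset(instance[key], subschema))
    return errors


# Step 2: extract the response body manually (a hard-coded example body here).
raw_body = '{"id": 7, "name": "widget"}'
body = json.loads(raw_body)

# Step 3: run the assertion in code.
errors = validate_subset(body, schema)
assert errors == [], errors
print("schema check passed")
```

Even this reduced version has an edge case to get right (booleans passing as integers), which is the kind of detail a built-in assertion takes off your plate.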

There are other examples in the Runscope docs that direct the user to write a custom script to achieve a goal. One is calculating the length of a JSON array: the Runscope blog recommends using a custom script, whereas Assertible has first-class support for calculating the length of a JSON array.
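For a sense of scale, a scripted array-length check looks something like the sketch below. It is Python rather than a Runscope JavaScript snippet, and the `array_length` helper, the `items` key, and the example body are all hypothetical.

```python
import json

# Hard-coded example response body; a real script would read it from the response.
raw_body = '{"items": [{"id": 1}, {"id": 2}, {"id": 3}]}'


def array_length(raw: str, key: str) -> int:
    """Parse a JSON body and return the length of the array stored under `key`."""
    value = json.loads(raw)[key]
    if not isinstance(value, list):
        raise TypeError(f"{key!r} is not a JSON array")
    return len(value)


assert array_length(raw_body, "items") == 3
```

It is only a few lines, but it is still code that someone has to write, review, and keep in sync with the API.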

While the Runscope approach is very flexible, it can also introduce bugs and flakiness by the very nature of having to manually write code (anyone ever had a bug in a seemingly "obvious" script?). These issues can turn into a maintenance burden over time.

Using custom scripts is not without merit and we understand that not everything can be tested using point-and-click assertions. However, the added flexibility makes it difficult to reason about failures and keep a clear mental model of the test when failures do occur.

First-class continuous integration and delivery automation

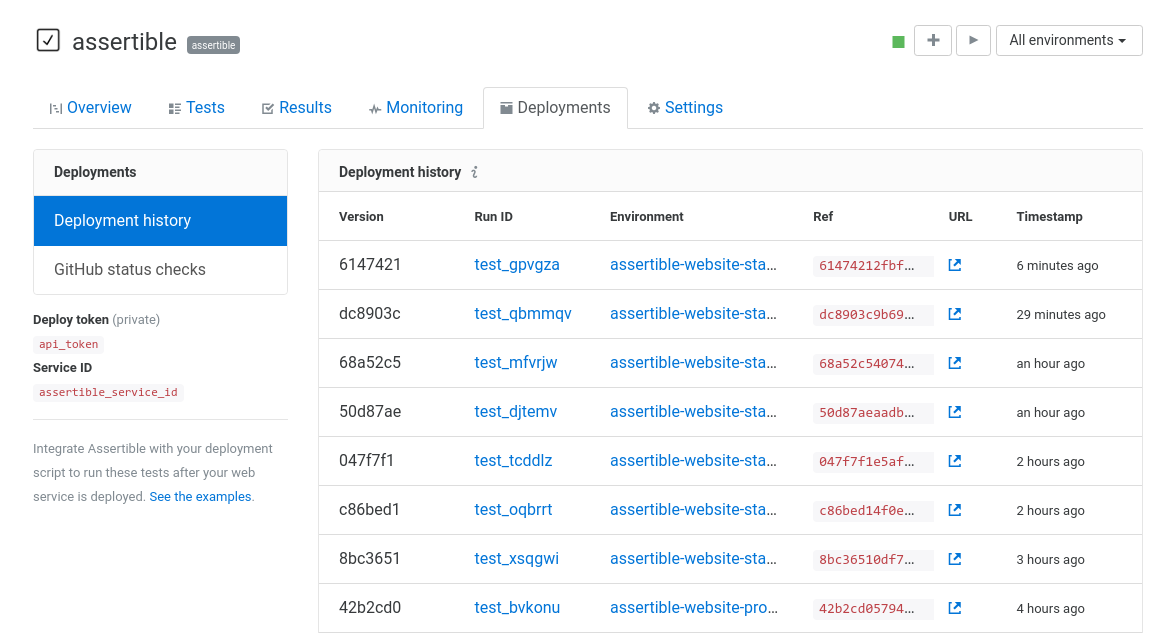

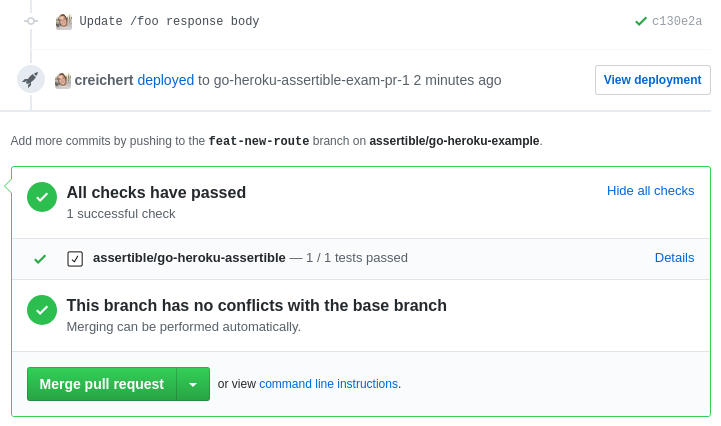

Assertible focuses on automation first and has first-class support for modern DevOps workflows like continuous integration and delivery pipelines. For example, Assertible has an API, the Deployments API, designed specifically to run tests after deployments from a continuous delivery pipeline.

The Deployments API is designed to automate API tests after a deployment and save information about the release so that regressions can be tracked and identified by subsequent tests. You can save version numbers, code refs, hyperlinks, and see a complete history of your web service's deployments along with the results of the subsequent tests in Assertible.
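As a rough sketch of what a post-deploy pipeline step might look like, the snippet below builds (but does not send) a deployment request. Treat the details as assumptions to verify against the Deployments API documentation: the endpoint URL, the field names (`service`, `environmentName`, `version`, `ref`), and the use of the API token as a basic-auth username are written from memory, and the token, service ID, and version values are placeholders.

```python
import base64
import json
import urllib.request
from typing import Optional

# Assumed endpoint; confirm against the Deployments API documentation.
DEPLOYMENTS_URL = "https://assertible.com/deployments"


def build_deployment_request(token: str, service_id: str, version: str,
                             environment: str = "production",
                             ref: Optional[str] = None) -> urllib.request.Request:
    """Build a POST request describing one deployment (field names are assumptions)."""
    payload = {
        "service": service_id,
        "environmentName": environment,
        "version": version,
    }
    if ref is not None:
        payload["ref"] = ref  # code ref, e.g. a commit SHA
    # Assumed auth scheme: API token as the basic-auth username, empty password.
    credentials = base64.b64encode(f"{token}:".encode()).decode()
    return urllib.request.Request(
        DEPLOYMENTS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
request = build_deployment_request("example-token", "example-service-id",
                                   "v1.4.2", ref="a1b2c3d")
print(request.get_method(), request.full_url)
print(request.data.decode())
# A pipeline step would then send it with urllib.request.urlopen(request).
```

Recording the version and code ref with each deployment is what lets later test failures be traced back to a specific release.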

Modern tools like GitHub are also directly supported in the Deployments API. Assertible is capable of propagating events to GitHub so that deployment URLs can be linked, and test results can be examined directly from pull requests. This can save a massive amount of time if your team is collaborating on GitHub.

Both Runscope and Assertible have a concept of Trigger URLs, which can be used to execute tests from a script. One advantage of Runscope's trigger URLs is that they support batch operations using multiple input variable sets, which can be quite useful in a number of cases. It's a feature we would definitely like Assertible to support one day.

Simplified request and response debugging

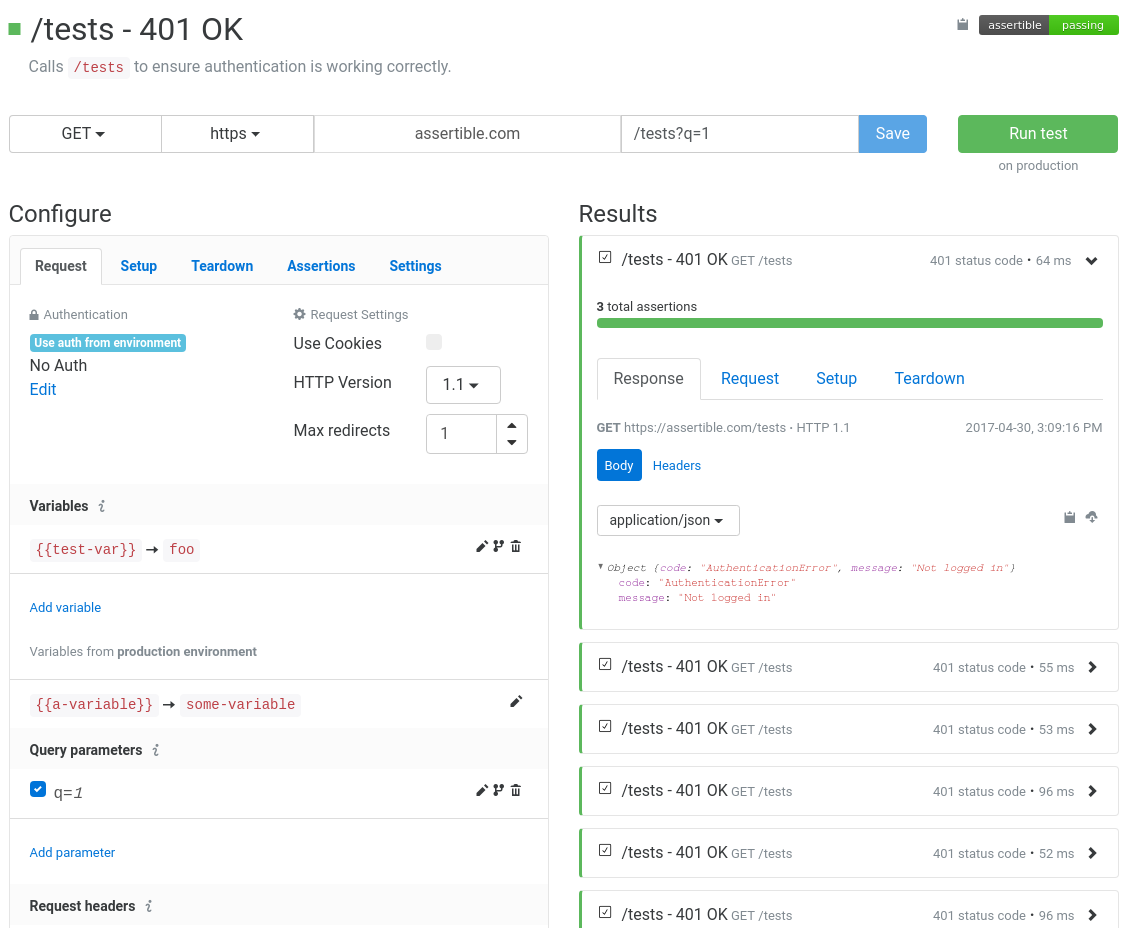

Creating and maintaining test cases can be time-consuming and often tricky to get right in any tool. We have put a lot of effort into making it simple and intuitive to debug and configure test HTTP requests in Assertible.

For example, the ability to enable/disable query parameters and headers makes it easy to quickly view the difference between two API responses (one with and one without). Doing this is tedious in Runscope because you have to create and delete the header or query parameter each time you run the test.
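The idea behind the toggle can be sketched as a flag on each parameter, so that disabling one keeps its configured value around. This is an illustration of the concept only, not Assertible's implementation; `Param`, `build_url`, and the example URL are hypothetical.

```python
from dataclasses import dataclass
from urllib.parse import urlencode


@dataclass
class Param:
    name: str
    value: str
    enabled: bool = True   # toggled off instead of deleted


def build_url(base_url: str, params: list[Param]) -> str:
    """Build the request URL from only the enabled query parameters."""
    active = [(p.name, p.value) for p in params if p.enabled]
    return f"{base_url}?{urlencode(active)}" if active else base_url


params = [Param("page", "2"), Param("expand", "customer")]

with_expand = build_url("https://api.example.com/orders", params)
params[1].enabled = False            # disable it, but keep the configured value
without_expand = build_url("https://api.example.com/orders", params)

print(with_expand)
print(without_expand)
```

Flipping `enabled` back to `True` restores the original request exactly, which is what makes comparing the two responses quick.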

One of our biggest user-experience goals on the Assertible test configuration page was to make all relevant HTTP request/response data and assertions available with as few clicks as possible. In Assertible, it's trivial to see all variables, headers, and query parameters that are in scope for a test as well as a recent history of test results.

In Runscope, however, editing a test and analyzing the results is repetitive and takes several clicks to get the full picture. For example, when editing a test, you only have access to the most recent response which makes it difficult to observe the effect your configuration has on several previous test runs:

The only way to view the latest result in the above image is to click Last Response Data. The layout makes it difficult to interactively work with the results to configure assertions and request information. In Assertible, nearly all HTTP request configuration and response data can be viewed in a single click.

Convinced? Get started testing now!

Assertible is free to use. Contact us if you have any questions or feedback!

Conclusions

In this post I've outlined some things Assertible does better than Runscope. Of course, this blog post would not be complete if I did not give credit where credit is due. Runscope has some useful features that Assertible does not support:

- On-premise support

- Multi-region testing and monitoring

- Live traffic alerts

- Enterprise features

- Two-factor authentication

- SAML single sign-on support

- Dedicated account management and customer support for enterprise product tier

While I am obviously biased towards Assertible, I've tried to compare the products as fairly as possible throughout this post to exemplify the specific workflows we have improved when building Assertible.

If I have misrepresented or completely misunderstood a Runscope feature, I am happy to update this post. Just contact me on Twitter or using the contact page.

Examples and resources:

- The rise of APIs - TechCrunch

- Just Say No to More End-to-End Tests - Google Testing Blog

- QA in Production - Rouan Wilsenach

- Techniques to reduce API testing errors and improve your QA process

:: Christopher Reichert
